Adversarial attacks represent one of the most critical challenges for modern AI security. Discover how these invisible attacks work and the defense strategies that are redefining artificial intelligence development.

In artificial intelligence, attackers and defenders are locked in a constant contest of escalating sophistication. Adversarial attacks are among the most insidious threats to AI models because they can deceive even advanced systems with changes that are imperceptible to the human eye.

Understanding Adversarial Attacks

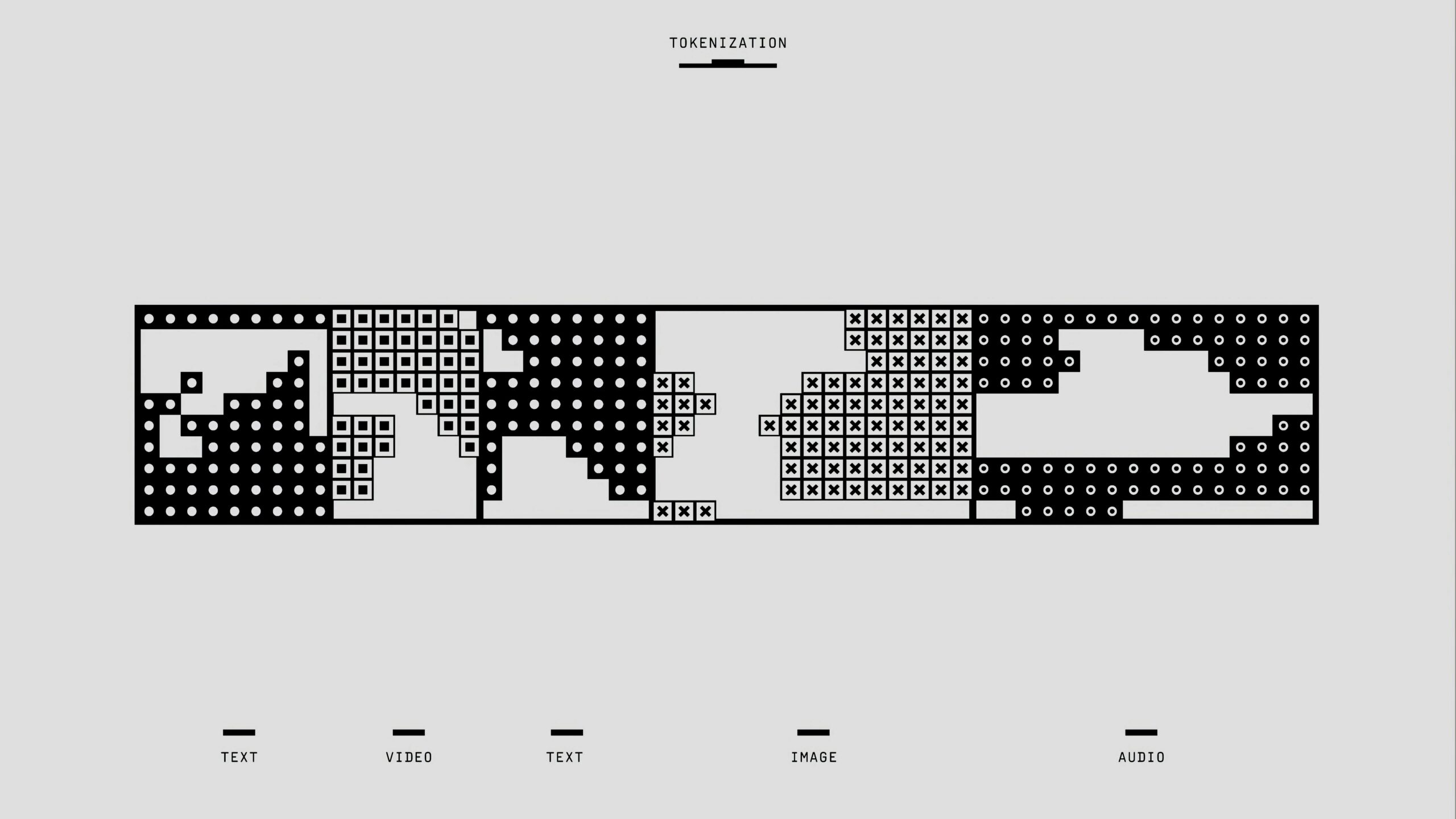

An adversarial attack slightly modifies an input in order to cause classification errors or other unwanted behavior in an artificial intelligence model. These modifications, called adversarial perturbations, are often too subtle for a human to notice, yet large enough to change the model's output.
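To make the idea of a small perturbation concrete, here is a minimal sketch of the fast gradient sign method (FGSM), a well-known white-box technique that moves every input feature by a fixed amount `eps` in the direction that increases the model's loss. The logistic-regression model, its weights, the input, and the value of `eps` are all hypothetical toy values chosen for illustration, not taken from any real system.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def fgsm(x, y, w, b, eps):
    """Return x + eps * sign(gradient of the loss w.r.t. x) for logistic regression."""
    p = sigmoid(x @ w + b)      # predicted probability of class 1
    grad_x = (p - y) * w        # gradient of the cross-entropy loss w.r.t. the input
    return x + eps * np.sign(grad_x)

# Hypothetical toy model and input (assumptions for illustration only).
w = np.array([1.0, -2.0, 0.5, 1.5])
b = 0.0
x = np.array([0.2, -0.1, 0.3, 0.1])
y = 1.0  # true label of x

x_adv = fgsm(x, y, w, b, eps=0.2)

clean_pred = int(sigmoid(x @ w + b) > 0.5)      # prediction on the original input
adv_pred = int(sigmoid(x_adv @ w + b) > 0.5)    # prediction on the perturbed input
print(clean_pred, adv_pred, np.max(np.abs(x_adv - x)))
```

In this toy setup no feature moves by more than `eps`, yet the predicted class changes. Real image models have far more input dimensions, which is one reason much smaller per-pixel changes can be enough.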

Imagine a stop sign that, with the addition of a few seemingly innocent stickers, is interpreted by an autonomous car as a speed limit sign. This scenario is not science fiction: researchers have demonstrated attacks of this kind against image classifiers in controlled experiments, and they are addressing the problem with growing urgency.

Types of Adversarial Threats

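Adversarial attacks are commonly classified along two dimensions: how much the attacker knows about the model (white-box versus black-box) and where the perturbation is applied (physical versus digital):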

- White-box attacks: The attacker has complete access to the target model, including parameters and architecture

- Black-box attacks: The attacker can only observe system outputs without knowing the internal structure

- Physical attacks: Modifications applied in the real world, such as stickers on objects or patterns on clothing

- Digital attacks: Manipulation of digital data such as images, audio, or text
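The difference between white-box and black-box access can be illustrated with a short sketch in which the attacker never sees the model's weights and can only call a `query` function. The random-search strategy below is a deliberately simple illustration, not a specific published attack; the hidden model, the `make_black_box` and `random_search_attack` helpers, and all numeric values are assumptions made for this example.

```python
import numpy as np

def make_black_box(w, b):
    """Wrap a hidden model so the attacker sees only its output probability."""
    def query(x):
        return 1.0 / (1.0 + np.exp(-(x @ w + b)))
    return query

def random_search_attack(query, x, eps, max_queries=500, seed=0):
    """Search the L-infinity ball of radius eps around x using queries only.

    Assumes the original input is classified as class 1 (probability > 0.5).
    """
    rng = np.random.default_rng(seed)
    best = x.copy()
    best_p = query(best)
    queries = 1
    while queries < max_queries and best_p > 0.5:
        # Propose a random corner of the eps-ball around the original input.
        candidate = x + eps * rng.choice([-1.0, 1.0], size=x.shape)
        p = query(candidate)
        queries += 1
        if p < best_p:  # keep proposals that lower the class-1 confidence
            best, best_p = candidate, p
    return best, best_p, queries

# Hypothetical hidden model; the attack code never reads w or b directly.
query = make_black_box(w=np.array([1.0, -2.0, 0.5, 1.5]), b=0.0)
x = np.array([0.2, -0.1, 0.3, 0.1])

x_adv, p_adv, n_queries = random_search_attack(query, x, eps=0.2)
print(p_adv < 0.5, n_queries)
```

A white-box attacker could compute the best perturbation directly from the gradient. The black-box attacker here pays for the missing information with additional queries, which is why rate limiting and query monitoring are sometimes used as practical mitigations.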

At-Risk Sectors and Implications

Adversarial vulnerabilities have particularly critical implications in sensitive sectors. In the automotive sector, the computer vision systems of autonomous cars could be deceived by strategic modifications to road signage. In security applications, facial recognition systems could be evaded with specific patterns worn on the face.

The medical sector is not immune either: diagnostic algorithms for medical imaging could be manipulated to hide pathologies or create false ones, with potentially fatal consequences for patients.

Defense Strategies and Robustness

Research in adversarial defense focuses on several complementary strategies:

- Adversarial Training: Training models using adversarial examples to increase their robustness

- Defensive Distillation: Training a second model on the softened probability outputs of a first model, with the aim of making it less sensitive to small perturbations

- Certified Defenses: Methods that mathematically guarantee the prediction cannot change for any perturbation within a stated bound

- Detection Systems: Algorithms specialized in identifying adversarial inputs
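Of the strategies listed above, adversarial training is the easiest to sketch in a few lines: at every training step the inputs are replaced by adversarial versions crafted against the current model, and the model is updated on those instead. The example below is a minimal illustration on a logistic-regression model, using FGSM as the attack. The synthetic data, the learning rate, the step count, and `eps` are assumptions chosen so the example runs quickly, and real systems typically use stronger attacks and deep networks.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def fgsm_batch(X, y, w, b, eps):
    """Perturb every row of X in the direction that increases its loss."""
    p = sigmoid(X @ w + b)
    grad_X = (p - y)[:, None] * w[None, :]
    return X + eps * np.sign(grad_X)

def adversarial_train(X, y, eps, lr=0.1, steps=300):
    """Gradient descent on the loss of FGSM-perturbed inputs."""
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(steps):
        X_adv = fgsm_batch(X, y, w, b, eps)  # regenerate attacks against the current model
        p = sigmoid(X_adv @ w + b)
        w -= lr * X_adv.T @ (p - y) / len(y)
        b -= lr * np.mean(p - y)
    return w, b

def accuracy(X, y, w, b):
    return float(np.mean((sigmoid(X @ w + b) > 0.5) == (y > 0.5)))

# Hypothetical synthetic data: two well-separated Gaussian blobs.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(2.0, 0.5, (100, 2)), rng.normal(-2.0, 0.5, (100, 2))])
y = np.concatenate([np.ones(100), np.zeros(100)])

eps = 0.5
w, b = adversarial_train(X, y, eps)
clean_acc = accuracy(X, y, w, b)
robust_acc = accuracy(fgsm_batch(X, y, w, b, eps), y, w, b)
print(clean_acc, robust_acc)
```

Reporting accuracy on both clean and attacked inputs, as `clean_acc` and `robust_acc` do here, is a common way to check whether a defense holds up, since a model can score well on clean data and still fail under attack.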

The Future of AI Security

The evolution of adversarial attacks is pushing the AI industry toward greater security awareness. Companies are investing heavily in red teaming, in which dedicated teams probe their systems for weaknesses, and in development methodologies that build security in from the initial design phases.

This battle between attack and defense is not destined to end soon, but it is catalyzing innovations that will make artificial intelligence more robust, reliable, and secure for all users. Awareness of these vulnerabilities represents the first step toward a future where AI can operate safely even in the most critical environments.