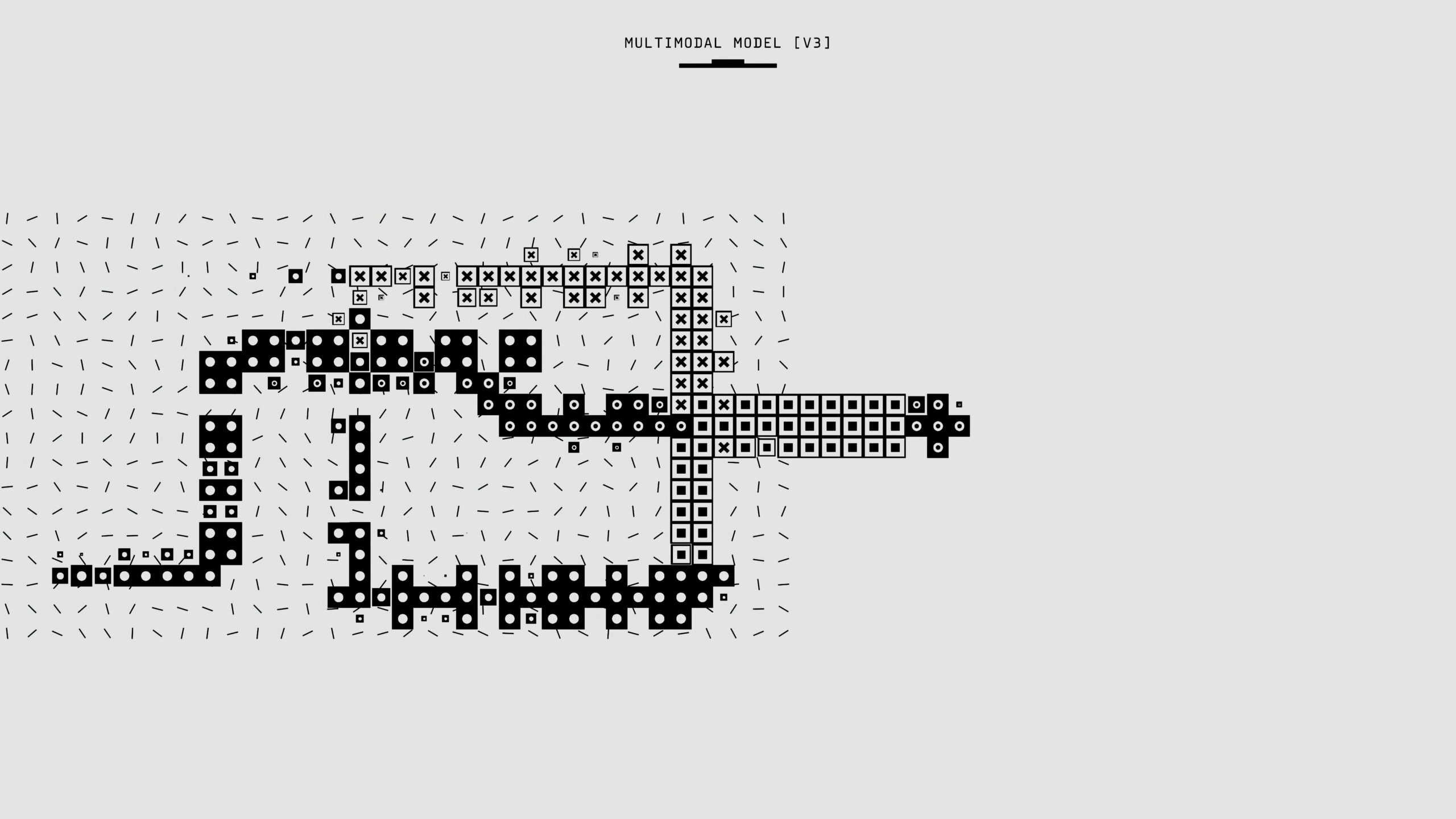

Multimodal artificial intelligence, which processes text, images, audio, and video together, is widely seen as the next frontier of AI. This technology is changing how machines interpret and interact with the real world.

Multimodal AI is emerging as one of the most promising innovations in today’s technological landscape. Unlike traditional AI systems that focus on a single type of data, it can process and integrate several input modalities at once: text, images, audio, video, and even sensor data.

What Makes Multimodal AI Special

The true power of multimodal AI lies in its ability to create meaningful connections between different types of information. When a system can “see” an image, “hear” the associated audio, and “read” contextual text, it can develop a richer and more nuanced understanding of the situation than a unimodal system can.

This multi-sensory integration more closely mirrors how humans perceive and interpret the world, which makes interactions with AI feel more natural and intuitive.

Revolutionary Applications

Multimodal AI applications are already transforming various sectors:

- Advanced Virtual Assistants: capable of understanding voice commands while analyzing the visual context of the environment

- Medical Diagnosis: integration of diagnostic images, clinical data, and verbally described symptoms

- Autonomous Vehicles: fusion of data from cameras, sensors, GPS, and maps for safer navigation

- Intelligent E-commerce: product search through photos, voice descriptions, or a combination of these inputs

- Personalized Education: content adaptation based on performance, preferences, and multimodal learning styles

Technical Challenges and Innovations

Developing multimodal AI presents unique challenges that researchers are addressing with innovative approaches. Temporal synchronization between different modalities, handling missing or incomplete data, and computational optimization are just some of the complex problems to solve.
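Two of the challenges named above, temporal synchronization and missing data, can be illustrated with a minimal Python sketch. It is not a production pipeline: the function names `align_nearest` and `fuse_available`, the nearest-timestamp matching rule, the tolerance value, and the choice of plain averaging as the fusion step are all assumptions made for illustration.

```python
from bisect import bisect_left
from typing import List, Optional, Sequence


def align_nearest(
    ref_times: Sequence[float],
    other_times: Sequence[float],
    tolerance: float,
) -> List[Optional[int]]:
    """For each reference timestamp, return the index of the nearest
    timestamp in other_times (assumed sorted ascending), or None when
    the closest one is further away than tolerance."""
    matches: List[Optional[int]] = []
    for t in ref_times:
        pos = bisect_left(other_times, t)
        # Only the neighbours just before and just after t can be nearest.
        candidates = [i for i in (pos - 1, pos) if 0 <= i < len(other_times)]
        best = min(candidates, key=lambda i: abs(other_times[i] - t), default=None)
        if best is not None and abs(other_times[best] - t) <= tolerance:
            matches.append(best)
        else:
            matches.append(None)  # treat this modality as missing at time t
    return matches


def fuse_available(
    vectors: Sequence[Optional[Sequence[float]]],
) -> Optional[List[float]]:
    """Average the feature vectors that are present, skipping missing
    (None) modalities. Returns None if every modality is missing."""
    present = [v for v in vectors if v is not None]
    if not present:
        return None
    dim = len(present[0])
    return [sum(v[d] for v in present) / len(present) for d in range(dim)]


# Hypothetical timestamps in seconds: video frames and audio windows.
frame_times = [0.0, 0.04, 0.08, 0.5]
audio_times = [0.0, 0.05, 0.1]
alignment = align_nearest(frame_times, audio_times, tolerance=0.03)

# Hypothetical embeddings for one moment; the audio modality is missing.
fused = fuse_available([[1.0, 2.0], None, [3.0, 4.0]])
```

Here the last video frame has no audio window within the tolerance, so it is paired with `None` and the fusion step simply averages whatever modalities remain. Real systems often use learned fusion and more careful resampling; averaging is only the simplest choice that degrades gracefully.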

Transformer architectures, which first reshaped natural language processing, are proving effective for multimodal processing as well, enabling unified models that can handle heterogeneous inputs.
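One common way to read "unified model" is that each modality is projected into a shared embedding size and the resulting tokens are concatenated into a single sequence that attention can mix. The pure-Python sketch below illustrates that idea only. The dimensions, the random weights, and the helper names are assumptions, and it omits things a real transformer would have, such as learned query/key/value projections, multiple heads, and positional or modality embeddings.

```python
import math
import random
from typing import List

Vector = List[float]
Matrix = List[Vector]


def project(tokens: Matrix, weights: Matrix) -> Matrix:
    """Map tokens of shape (n, d_in) to (n, d_model) using a weight
    matrix of shape (d_in, d_model)."""
    return [
        [sum(x * w for x, w in zip(tok, col)) for col in zip(*weights)]
        for tok in tokens
    ]


def softmax(scores: Vector) -> Vector:
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens: Matrix) -> Matrix:
    """Single-head scaled dot-product attention where queries, keys,
    and values are the tokens themselves (no learned projections)."""
    scale = math.sqrt(len(tokens[0]))
    out: Matrix = []
    for q in tokens:
        attn = softmax([sum(a * b for a, b in zip(q, k)) / scale for k in tokens])
        out.append([
            sum(w * v[d] for w, v in zip(attn, tokens))
            for d in range(len(q))
        ])
    return out


def random_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]


rng = random.Random(0)
D_MODEL = 4  # shared embedding size (illustrative)

text_tokens = random_matrix(3, 6, rng)    # 3 text tokens, 6 features each
image_patches = random_matrix(2, 8, rng)  # 2 image patches, 8 features each

w_text = random_matrix(6, D_MODEL, rng)   # stand-in for a learned text projection
w_image = random_matrix(8, D_MODEL, rng)  # stand-in for a learned image projection

# Both modalities now live in the same space and form one sequence.
sequence = project(text_tokens, w_text) + project(image_patches, w_image)
mixed = self_attention(sequence)
```

After the projection step, attention treats text tokens and image patches identically, so every output token is a weighted mix of information from both modalities.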

The Future of Human-Machine Interaction

Multimodal AI promises to make interaction with technology more natural and intuitive than ever. Imagine showing a photo to your AI assistant, describing aloud what you’re looking for, and receiving a contextual response that accounts for both.

This evolution represents not just an incremental improvement, but a qualitative leap toward AI systems that can truly understand and respond to the world in its multidimensional complexity, paving the way for innovations we can only imagine today.