Multimodal artificial intelligence represents the next evolutionary leap in AI, combining text, images, audio, and video for a more complete and natural understanding of the world. This technology promises to revolutionize human-machine interaction and open new frontiers in intelligent automation.

Artificial intelligence is taking a fundamental step forward: the ability to process and relate several input modalities at once, much as people combine sight, hearing, and language. Multimodal AI represents this new frontier, in which a single system handles text, images, audio, video, and other types of data in an integrated, coherent way.

What Is Multimodal AI

Unlike traditional systems that specialize in a single type of data, multimodal AI can analyze and correlate information from different sources. A multimodal system can, for example, watch a video, listen to its audio, read the subtitles, and combine all three into an understanding of the scene's overall context, much as a human would.

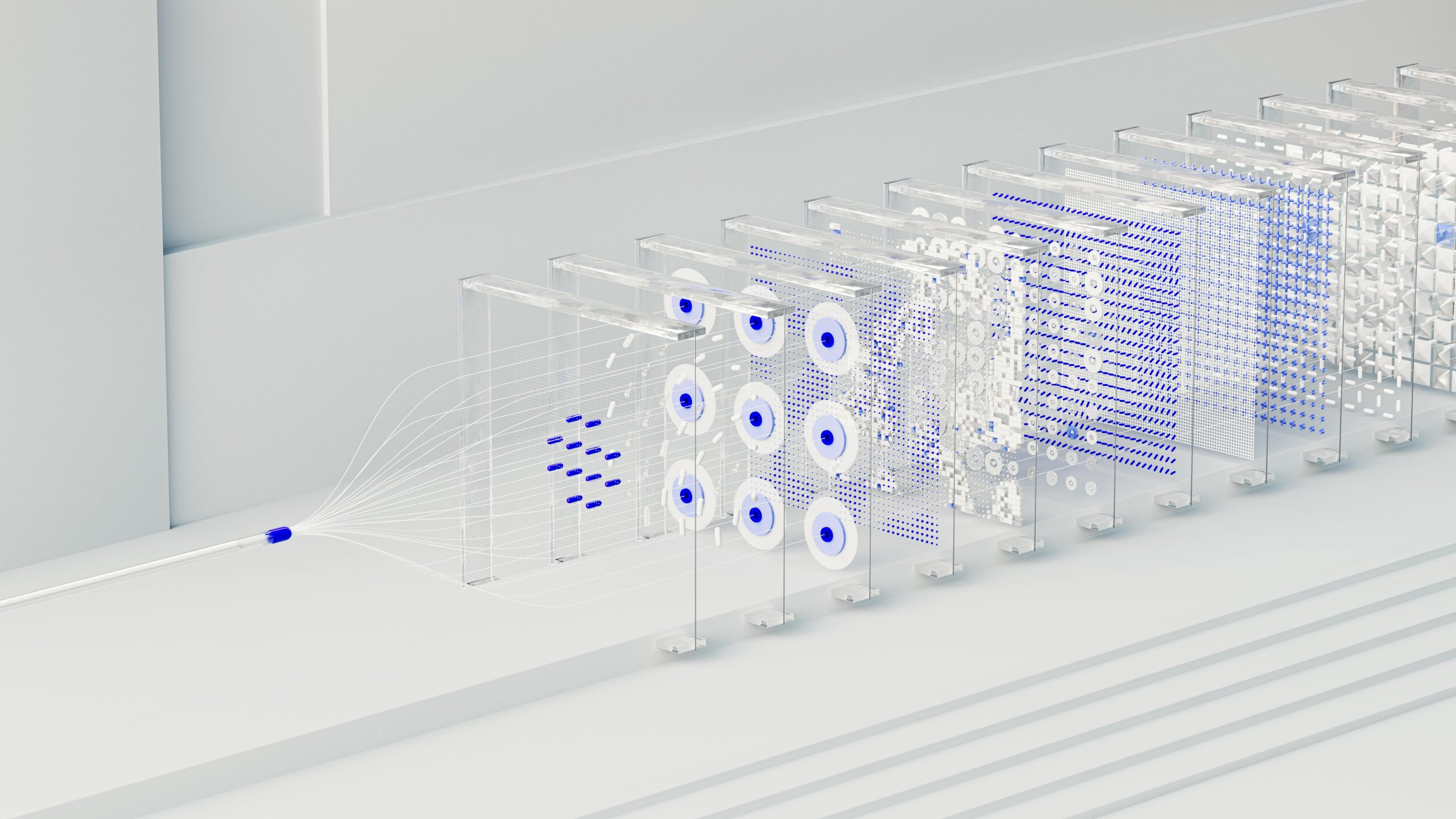

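This capability typically comes from combining specialized neural networks, often one encoder per modality, whose outputs are mapped into a shared internal representation. Because the modalities end up in the same representation space, the system can relate them to one another and build a unified understanding of the input.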
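To make the idea of a shared representation concrete, here is a minimal sketch in plain Python. It assumes each modality has already been turned into a feature vector by its own encoder, uses hypothetical hand-picked projection matrices (`W_text`, `W_image`) in place of learned weights, and fuses the projected embeddings by simple averaging. Real systems learn these projections and often use richer fusion strategies; the function names here are illustrative only.

```python
from typing import Dict, List

Vector = List[float]
Matrix = List[List[float]]


def project(features: Vector, weights: Matrix) -> Vector:
    """Linear projection: each output value is the dot product of one weight row with the input."""
    return [sum(w * x for w, x in zip(row, features)) for row in weights]


def fuse(embeddings: Dict[str, Vector]) -> Vector:
    """Average per-modality embeddings that already live in the same shared space."""
    dims = {len(v) for v in embeddings.values()}
    if len(dims) != 1:
        raise ValueError("all modality embeddings must have the same dimension")
    (dim,) = dims
    count = len(embeddings)
    return [sum(v[i] for v in embeddings.values()) / count for i in range(dim)]


# Hypothetical encoder outputs: text features are 3-D, image features are 4-D.
text_features = [1.0, 0.0, 2.0]
image_features = [0.5, 0.5, 0.0, 1.0]

# Hypothetical projection matrices mapping each modality into a shared 2-D space
# (in a real model these weights would be learned during training).
W_text = [[1.0, 0.0, 0.0], [0.0, 0.0, 1.0]]
W_image = [[2.0, 0.0, 0.0, 0.0], [0.0, 0.0, 0.0, 1.0]]

fused = fuse({
    "text": project(text_features, W_text),
    "image": project(image_features, W_image),
})
```

Averaging is only possible because both projections land in the same two-dimensional space; producing that common space from inputs of different sizes is the job of the projection step.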

Revolutionary Applications

Multimodal AI applications are already transforming various sectors:

- Advanced virtual assistants: Systems that can see what you’re doing, hear your voice, and respond in a contextually appropriate manner

- Diagnostic medicine: Simultaneous analysis of medical images, verbally described symptoms, and clinical data for more precise diagnoses

- Autonomous vehicles: Combined processing of visual, radar, lidar, and audio data for safer navigation

- Personalized education: Systems that adapt teaching by analyzing facial expressions, vocal responses, and tactile interactions

Technical Challenges

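Developing multimodal AI presents unique challenges. Different modalities must be synchronized in time (for example, matching speech to the video frames it accompanies), data arriving in very different formats must be managed together, and the modalities must be aligned semantically so that a word and the image region it describes map to related representations. Meeting these requirements calls for sophisticated architectures; researchers are working on multimodal transformers and cross-modal attention, in which elements of one modality (such as text tokens) attend directly to elements of another (such as image regions).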
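Cross-modal attention can be illustrated with the scaled dot-product attention used in transformers, where the queries come from one modality and the keys and values come from another. The sketch below is a simplified, single-head version in plain Python: it assumes text-token vectors act as queries and image-region vectors act as keys and values, and it omits the learned query/key/value projections and multiple heads that real models use. The example vectors are made up for illustration.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: Vector) -> Vector:
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries: List[Vector], keys: List[Vector], values: List[Vector]) -> List[Vector]:
    """Single-head scaled dot-product attention: each query (e.g. a text token)
    returns a weighted average of the values (e.g. image regions), weighted by
    how strongly the query matches each key."""
    scale = math.sqrt(len(keys[0]))
    value_dim = len(values[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        # Weighted sum of the value vectors.
        outputs.append([sum(w * v[j] for w, v in zip(weights, values)) for j in range(value_dim)])
    return outputs


# Hypothetical 2-D vectors: two text tokens attending over two image regions.
text_tokens = [[1.0, 0.0], [0.0, 1.0]]
region_keys = [[1.0, 0.0], [0.0, 1.0]]
region_values = [[10.0, 0.0], [0.0, 10.0]]

attended = cross_attention(text_tokens, region_keys, region_values)
```

Each text token's output leans toward the value of the image region whose key it most resembles: the first token's result is weighted toward `[10.0, 0.0]` and the second toward `[0.0, 10.0]`.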

Another significant challenge is training data: corpora in which the modalities are aligned (for example, images paired with captions, or video paired with time-synchronized audio) are complex and expensive to build, yet essential for training robust models.
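Building aligned data often starts with matching samples across modalities in time. As a minimal sketch, assuming each stream comes with sorted timestamps in seconds, the function below pairs every video frame with the nearest audio segment and drops frames that have no segment within a tolerance. The function name and the tolerance value are hypothetical; real pipelines may also need to handle clock drift, variable frame rates, and transcript alignment.

```python
import bisect
from typing import List, Optional, Tuple


def align_by_timestamp(
    video_ts: List[float], audio_ts: List[float], tolerance: float
) -> List[Tuple[int, int]]:
    """Pair each video frame index with the index of the nearest audio segment.

    Assumes audio_ts is sorted ascending. Frames with no audio segment within
    `tolerance` seconds are dropped; several frames may share one segment.
    """
    pairs = []
    for vi, t in enumerate(video_ts):
        j = bisect.bisect_left(audio_ts, t)
        best: Optional[Tuple[int, float]] = None
        # The nearest audio timestamp is either just before or just after t.
        for cand in (j - 1, j):
            if 0 <= cand < len(audio_ts):
                gap = abs(audio_ts[cand] - t)
                if gap <= tolerance and (best is None or gap < best[1]):
                    best = (cand, gap)
        if best is not None:
            pairs.append((vi, best[0]))
    return pairs


# Hypothetical timestamps (seconds) for video frames and audio segments.
video_ts = [0.0, 0.04, 0.08, 1.0]
audio_ts = [0.0, 0.05, 0.10]
pairs = align_by_timestamp(video_ts, audio_ts, tolerance=0.025)
```

Here the frame at 1.0 s is discarded because no audio segment lies within 25 ms of it, which is one simple way to keep only genuinely aligned samples.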

The Future of Human-Machine Interaction

Multimodal AI promises to make interaction with technology more natural and intuitive. Imagine being able to show an AI system a broken object, verbally describe the problem, and receive personalized repair instructions complete with diagrams and explanatory videos.

This technology is also opening new possibilities in augmented reality and social robotics, where multimodal understanding is essential for creating engaging experiences and natural interactions.

As we continue to refine these technologies, multimodal AI emerges as the bridge to a future where machines don’t just process information, but truly understand the world in its multisensory richness.